Review — CLIP: Learning Transferable Visual Models From Natural Language Supervision | by Sik-Ho Tsang | Medium

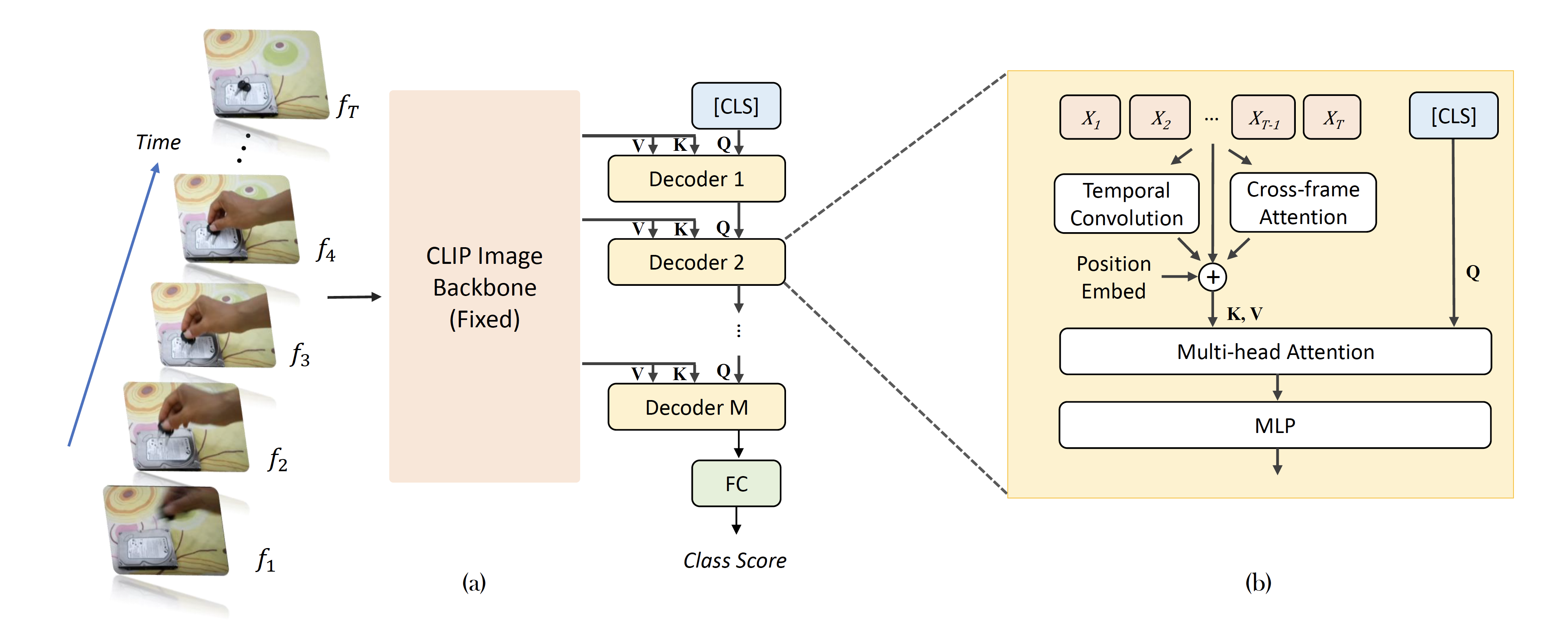

CLIP Itself is a Strong Fine-tuner: Achieving 85.7% and 88.0% Top-1 Accuracy with ViT-B and ViT-L on ImageNet – arXiv Vanity

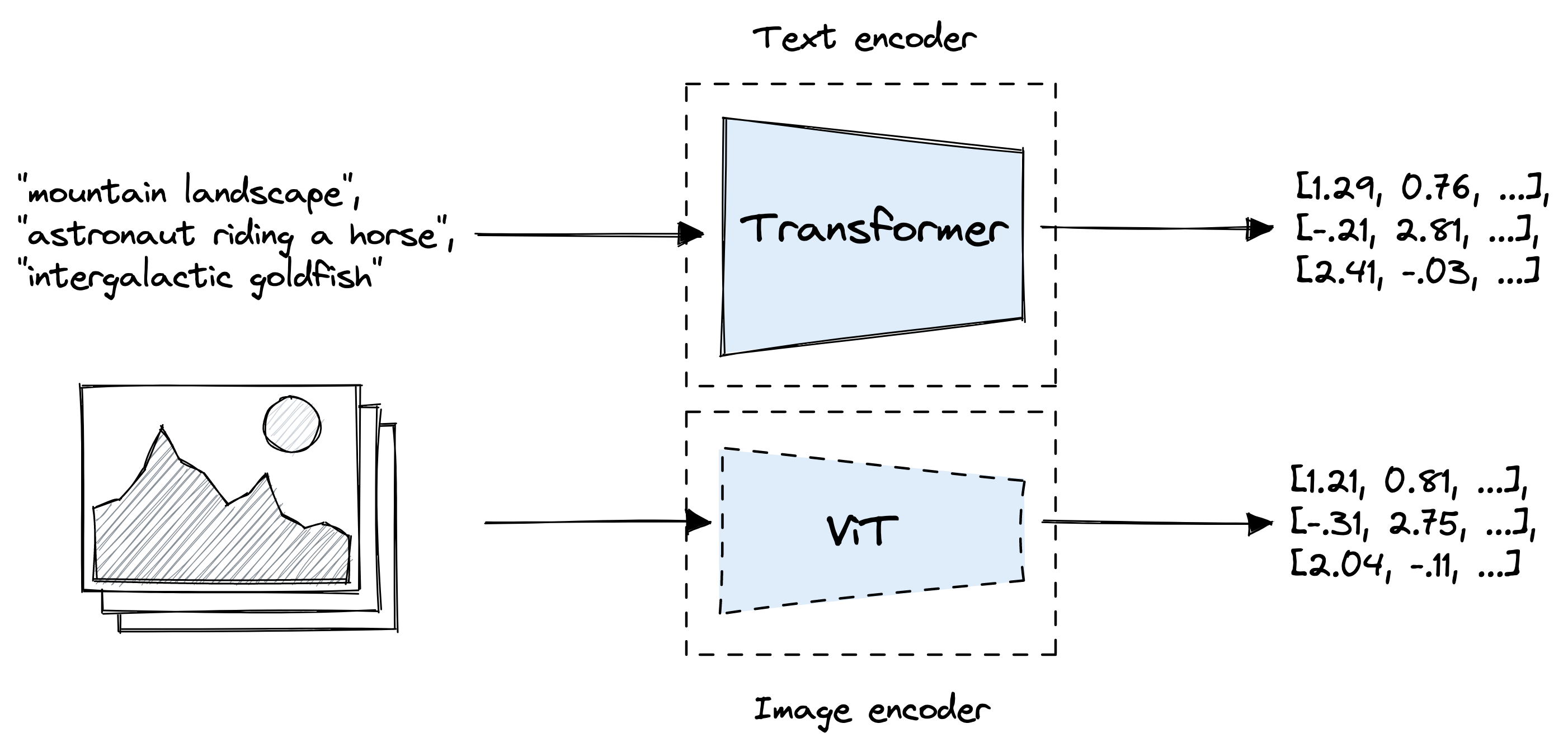

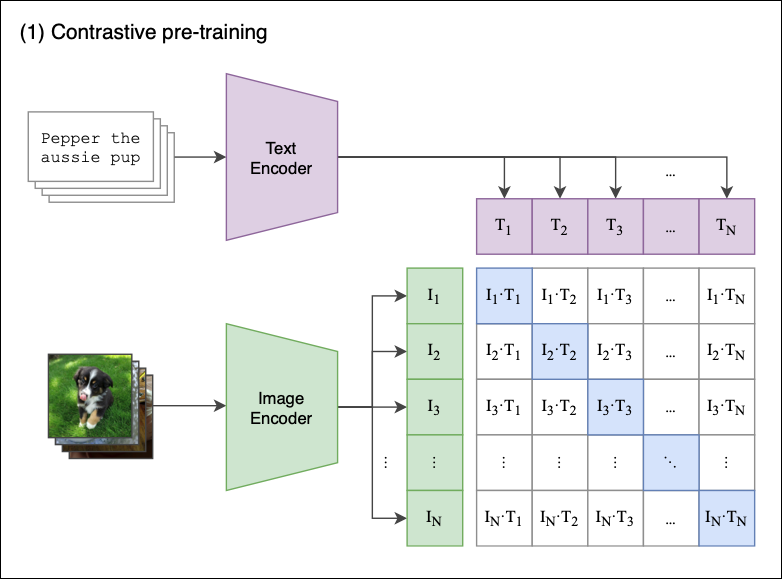

CLIP: The Most Influential AI Model From OpenAI — And How To Use It | by Nikos Kafritsas | Towards Data Science

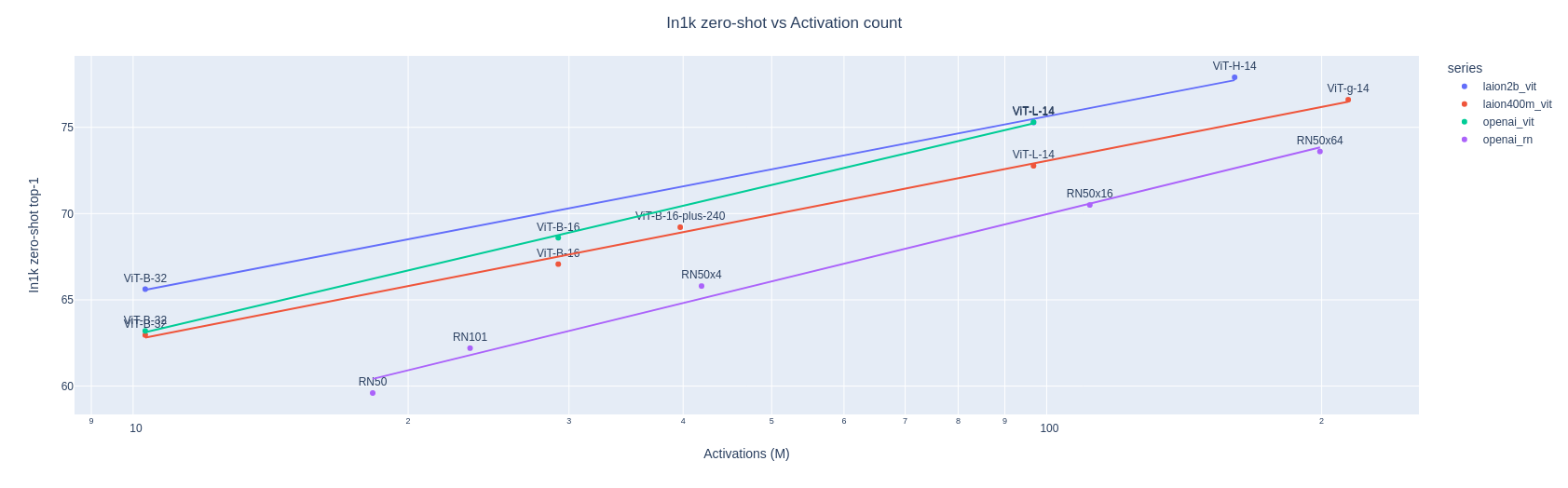

apolinário (multimodal.art) on Twitter: "Yesterday OpenCLIP released the first LAION-2B trained perceptor! a ViT-B/32 CLIP that surpasses OpenAI's ViT-B/32 quite significantly: https://t.co/X4vgW4mVCY https://t.co/RLMl4xvTlj" / Twitter

Principal components from PCA were computed on Clip-ViT-B-32 embeddings... | Download Scientific Diagram